Port Bridge

Building “hybrid” cloud applications, where parts of an app live up in a cloud infrastructure and other parts live at a hosting site, in a data center, or even in your house, ought to be simple – especially in this day and age of Web services. You create a Web service, make it accessible through your firewall and NAT, and the cloud-hosted app calls it. That’s as easy as it ought to be.

Unfortunately it’s not always that easy. If the server sits behind an Internet connection with dynamically assigned IP addresses, if the upstream ISP is blocking select ports, if it’s not feasible to open up inbound firewall ports, or if you have no influence over the infrastructure whatsoever, reaching an on-premise service from the cloud (or anywhere else) is a difficult thing to do. For these scenarios (and others) our team is building the Windows Azure platform AppFabric Service Bus (friends call us just Service Bus).

Now – the Service Bus and the client bits in the Microsoft.ServiceBus.dll assembly are great if you have services that can be readily hooked up to the Service Bus because they’re built with WCF. For services that aren’t built with WCF, but are at least using HTTP, I’ve previously shown a way to hook them into Service Bus and have also demoed an updated version of that capability at Sun’s Java One. I’ll release an update for those bits tomorrow after my talk at PDC09 – the version currently here on my blog (ironically) doesn’t play well with SOAP and also doesn’t have rewrite capabilities for WSDL. The new version does.

But what if your service isn’t a WCF service or doesn’t speak HTTP? What if it speaks SMTP, SNMP, POP, IMAP, RDP, TDS, SSH, ETC?

Introducing Port Bridge

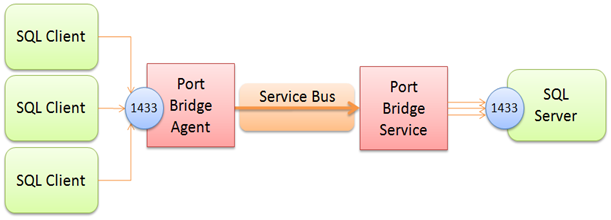

“Port Bridge” – which is just a descriptive name for this code sample, not an attempt at branding – is a point-to-point tunneling utility to help with these scenarios. Port Bridge consists of two components, the “Port Bridge Service” and the “Port Bridge Agent”. Here’s a picture:

The Agent’s job is to listen for and accept TCP or Named Pipe connections on a configurable port or local pipe name. The Service’s job is to accept incoming connections from the Agent, establish a duplex channel with the Agent, and pump the data from the Agent to the actual listening service – and vice versa. It’s actually quite simple. In the picture above you see that the Service is configured to connect to a SQL Server listening at the SQL Server default port 1433 and that the Agent, running on a different machine, is listening on port 1433 as well, thus mapping the remote SQL Server onto the Agent machine as if it ran there. You can (and I expect that to be more common) map the service on the Agent to any port you like – say higher up at 41433.

In order to increase the responsiveness and throughput for protocols that are happy to kill and reestablish connections, as HTTP does, “Port Bridge” always multiplexes concurrent traffic flowing between two parties over the same logical socket. When using Port Bridge to bridge to a remote HTTP proxy that the Service machine can see but the Agent machine can’t (which turns out to be the at-home scenario that this capability emerged from), there are very many, very short-lived connections being tunneled through the channel. Creating a new Service Bus channel for each of these connections is feasible – but not very efficient. Holding on to a connection for an extended period of time and multiplexing traffic over it is also beneficial in the Port Bridge case because it uses the Service Bus Hybrid connection mode by default. With Hybrid, all connections are first established through the Service Bus Relay, and then our bits do a little “NAT dance” trying to figure out whether there’s a way to connect both parties with a direct socket. If that works, the connection gets upgraded in-flight to the most direct connection available. The probing, handshake, and upgrade of the socket may take 2-20 seconds, and there’s some degree of luck involved in getting that direct socket established on a very busy NAT, so we want to maximize the use of that precious socket instead of throwing it away all the time.

That seems familiar?!

You may notice that SocketShifter (built by our friends at AWS in the UK) is quite similar to Port Bridge. Even though the timing of the respective releases may not suggest it, Port Bridge is indeed SocketShifter’s older brother. Because we couldn’t make up our mind for a while on whether to release Port Bridge, I had AWS take a look at the service contract shown below and explained a few principles that I’m also explaining here, and they had a first version of SocketShifter running within a few hours. There’s nothing wrong with having two variants of the same thing.

How does it work?

Since I’m publishing this as a sample, I obviously need to spend a little time on the “how”, even though I’ll keep it brief here and explain it in more detail in a future post. At the heart of the app, the contract that’s used between the Agent and the Service is a simple duplex WCF contract:

    [ServiceContract(Namespace="n:", Name="idx", CallbackContract=typeof(IDataExchange), SessionMode=SessionMode.Required)]
    public interface IDataExchange
    {
        [OperationContract(Action="c", IsOneWay = true, IsInitiating=true)]
        void Connect(string i);

        [OperationContract(Action = "w", IsOneWay = true)]
        void Write(TransferBuffer d);

        [OperationContract(Action = "d", IsOneWay = true, IsTerminating = true)]
        void Disconnect();
    }

There’s a way to establish a session, send data either way, and close the session. The TransferBuffer type is really just a trick to avoid extra buffer copies during serialization for efficiency reasons. But that’s it. The rest of Port Bridge is a set of queue-buffered streams and pumps to make the data packets flow smoothly and to accept inbound sockets/pipes and dispatch them out to the proxied services. What’s noteworthy is that Port Bridge doesn’t use WCF streaming, but sends data in chunks – which allows for much better flow control and enables multiplexing.

Now you might say: “You are using a WCF ServiceContract? Isn’t that using SOAP, and doesn’t that cause ginormous overhead?” No, it doesn’t. We’re using the WCF binary encoder in session mode here. That’s about as efficient as you can get on the wire with serialized data. The per-frame SOAP overhead for net.tcp with the binary encoder in session mode is on the order of 40-50 bytes per message because of dictionary-based metadata compression. The binary encoder also isn’t doing any base64 trickery but treats binary as binary – one byte is one byte. Port Bridge uses a default frame size of 64K (which gets filled up in high-volume streaming cases due to the built-in Nagling support), and so we’re looking at an overhead of less than 0.1%. That’s not shabby.
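As a quick back-of-the-envelope check of that figure: assuming “64K” means 65,536 bytes of payload and that the frame is completely full (both are assumptions for the sake of the arithmetic, not measurements), the upper end of the quoted per-message overhead works out to roughly:

```latex
\[
\frac{50\ \text{bytes}}{64 \times 1024\ \text{bytes}}
  = \frac{50}{65536}
  \approx 0.00076
  = 0.076\%
\]
```

With 40 bytes instead of 50, the same calculation gives about 0.061%. Frames that are only partially filled carry proportionally more overhead, which is why the built-in Nagling matters for high-volume streams.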

How do I use it?

This is a code sample and thus you’ll have to build it using Visual Studio 2008. You’ll find three code projects: PortBridge (the Service), PortBridgeAgent (the Agent), and the Microsoft.Samples.ServiceBus.Connections assembly that contains the bulk of the logic for Port Bridge. It’s mostly straightforward to embed the agent side or the service side into other hosts and I’ll show that in a separate post.

Service

The service’s exe file is “PortBridge.exe” and is both a console app and a Windows Service. If the Windows Service isn’t registered, the app will always start as a console app. If the Windows Service is registered (with the installer or with installutil.exe), you can force console mode with the -c command line option.

The app.config file on the Service side (PortBridge/app.config, PortBridge.exe.config in the binaries folder) specifies which ports or named pipes you want to project into Service Bus:

    <portBridge serviceBusNamespace="mynamespace"
                serviceBusIssuerName="owner"
                serviceBusIssuerSecret="xxxxxxxx"
                localHostName="mybox">
      <hostMappings>
        <add targetHost="localhost" allowedPorts="3389" />
      </hostMappings>
    </portBridge>

The serviceBusNamespace attribute takes your Service Bus namespace name, and the serviceBusIssuerSecret the respective secret. The serviceBusIssuerName should remain “owner” unless you know why you want to change it. If you don’t have an AppFabric account you might not understand what I’m writing about: Go make one.

The localHostName attribute is optional and when set, it’s the name that’s being used to map “localhost” into your Service Bus namespace. By default the name that’s being used is the good old Windows computer-name.

The hostMappings section contains a list of hosts and rules for what you want to project out to Service Bus. Mind that all inbound connections to the endpoints generated from the host mappings section are protected by the Access Control service and require a token that grants access to your namespace – which is already very different from opening up a port in your firewall. If you open up port 3389 (Remote Desktop) through your firewall and NAT, everyone can walk up to that port and try their password-guessing skills. If you open up port 3389 via Port Bridge, you first need to get through the Access Control gate before you can even get at the remote port.

New host mappings are added with the add element. You can add any host that the machine running the Port Bridge service can “see” via the network. The allowedPorts and allowedPipes attributes define which TCP ports and/or which local named pipes are accessible. Examples:

- <add targetHost="localhost" allowedPorts="3389" /> project the local machine into Service Bus and only allow Remote Desktop (3389)

- <add targetHost="localhost" allowedPorts="3389,1433" /> project the local machine into Service Bus and allow Remote Desktop (3389) and SQL Server TDS (1433)

- <add targetHost="localhost" allowedPorts="*" /> project the local machine into Service Bus and allow connections to any TCP port

- <add targetHost="localhost" allowedPipes="sql/query" /> project the local machine into Service Bus and allow no TCP connections, only named pipe connections to \\.\pipe\sql\query

- <add targetHost="otherbox" allowedPorts="1433" /> project the machine “otherbox” into Service Bus and allow SQL Server TDS connections via TCP
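Several of these entries can sit side by side in one hostMappings section. Here is a minimal sketch that reuses only the attributes and host names from the examples above. Note that putting allowedPorts and allowedPipes on the same add element is my reading of the “and/or” in the description, so treat that part as an assumption and check it against the sample code:

```xml
<hostMappings>
  <!-- Local machine: Remote Desktop over TCP plus the default SQL Server pipe.
       Combining both attributes on one entry is an assumption. -->
  <add targetHost="localhost" allowedPorts="3389" allowedPipes="sql/query" />
  <!-- A second machine the Service can reach: SQL Server TDS only. -->
  <add targetHost="otherbox" allowedPorts="1433" />
</hostMappings>
```

Anything not listed stays unreachable through Port Bridge, so it pays to keep the list as narrow as the scenario allows.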

Agent

The agent’s exe file is “PortBridgeAgent.exe” and is also both a console app and a Windows Service.

The app.config file on the Agent side (PortBridgeAgent/app.config, PortBridgeAgent.exe.config in the binaries folder) specifies which ports or pipes you want to project into the Agent machine and whether and how you want to firewall these ports. The firewall rules here do not interact with your local firewall. They are an additional layer of protection.

    <portBridgeAgent serviceBusNamespace="mysolution"
                     serviceBusIssuerName="owner"
                     serviceBusIssuerSecret="xxxxxxxx">
      <portMappings>
        <port localTcpPort="13389" targetHost="mymachine" remoteTcpPort="3389">
          <firewallRules>
            <rule source="127.0.0.1" />
            <rule sourceRangeBegin="10.0.0.0" sourceRangeEnd="10.255.255.255" />
          </firewallRules>
        </port>
      </portMappings>
    </portBridgeAgent>

Again, the serviceBusNamespace attribute takes your Service Bus namespace name, and the serviceBusIssuerSecret the respective secret.

The portMappings collection holds the individual ports or pipes you want to bring onto the local machine. Shown above is a mapping of Remote Desktop (port 3389 on the machine with the computer name or localHostName ‘mymachine’) to the local port 13389. Once Service and Agent are running, you can connect to the agent machine on port 13389 using the Remote Desktop client – with PortBridge mapping that to port 3389 on the remote box.

The firewallRules collection allows (un-)constraining the TCP clients that may connect to the projected port. By default, only connections from the same machine are permitted.

For named pipes, the configuration is similar, except that there are no firewall rules: named pipes are always constrained to local connectivity by a set of ACLs that are applied to the pipe. Pipe names must be relative. Here’s what a named pipe projection of a default SQL Server instance could look like:

<port localPipe="sql/remote" targetHost="mymachine" remotePipe="sql/query"/>
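To tie this back to the SQL Server scenario from the picture, here is a sketch of a portMappings section that brings the remote SQL Server onto the Agent machine twice: once over TCP on the higher local port 41433, and once via the named pipe. The host name 'mymachine' is just the placeholder used above, and mixing TCP and pipe mappings in one collection is an assumption on my part:

```xml
<portMappings>
  <!-- SQL Server TDS on 'mymachine', surfaced locally on port 41433 -->
  <port localTcpPort="41433" targetHost="mymachine" remoteTcpPort="1433">
    <firewallRules>
      <!-- only clients on the Agent machine itself may connect -->
      <rule source="127.0.0.1" />
    </firewallRules>
  </port>
  <!-- The same SQL Server instance via its default named pipe -->
  <port localPipe="sql/remote" targetHost="mymachine" remotePipe="sql/query" />
</portMappings>
```

For either mapping to work, the Service side has to allow the matching target in its hostMappings, i.e. port 1433 and/or the pipe sql/query on that host.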

There’s more to write about this, but how about I let you take a look at the code first. I’ve also included two setup projects that can easily install Agent and Service as Windows Services. You obviously don’t have to use those.

[Updated archive (2010-06-10) fixing config issue:]

PortBridge20100610.zip (90.99 KB)